360Roam: Real-Time Indoor Roaming Using Geometry-Aware 360° Radiance Fields

Huajian Huang, Yingshu Chen, Tianjia Zhang and Sai-Kit Yeung

The Hong Kong University of Science and Technology

arXiv 2022 | SIGGRAPH Asia 2022 Technical Communications

Abstract

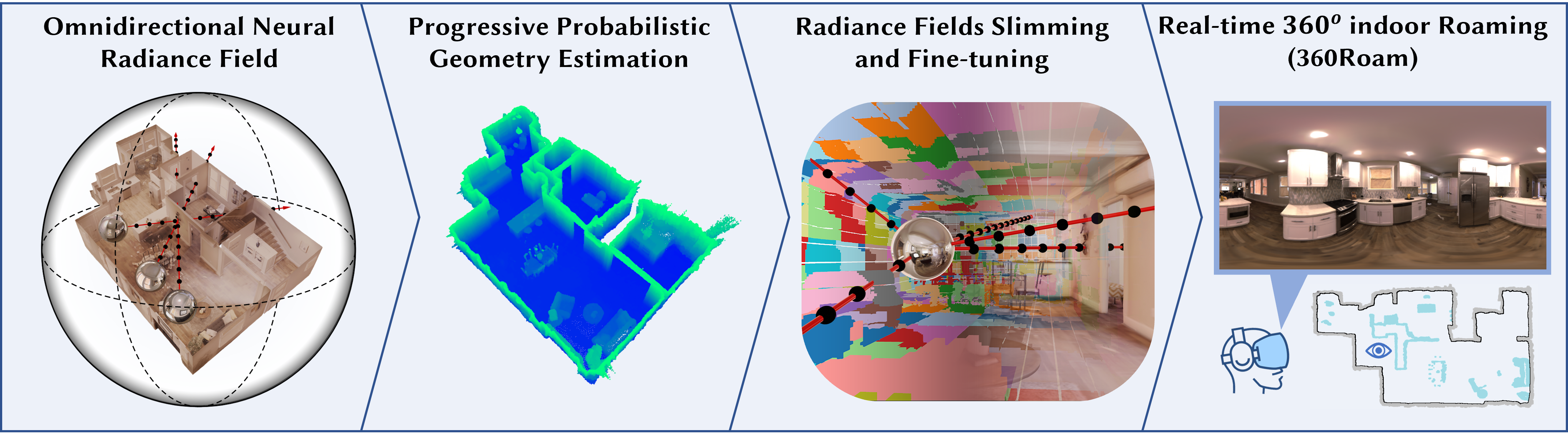

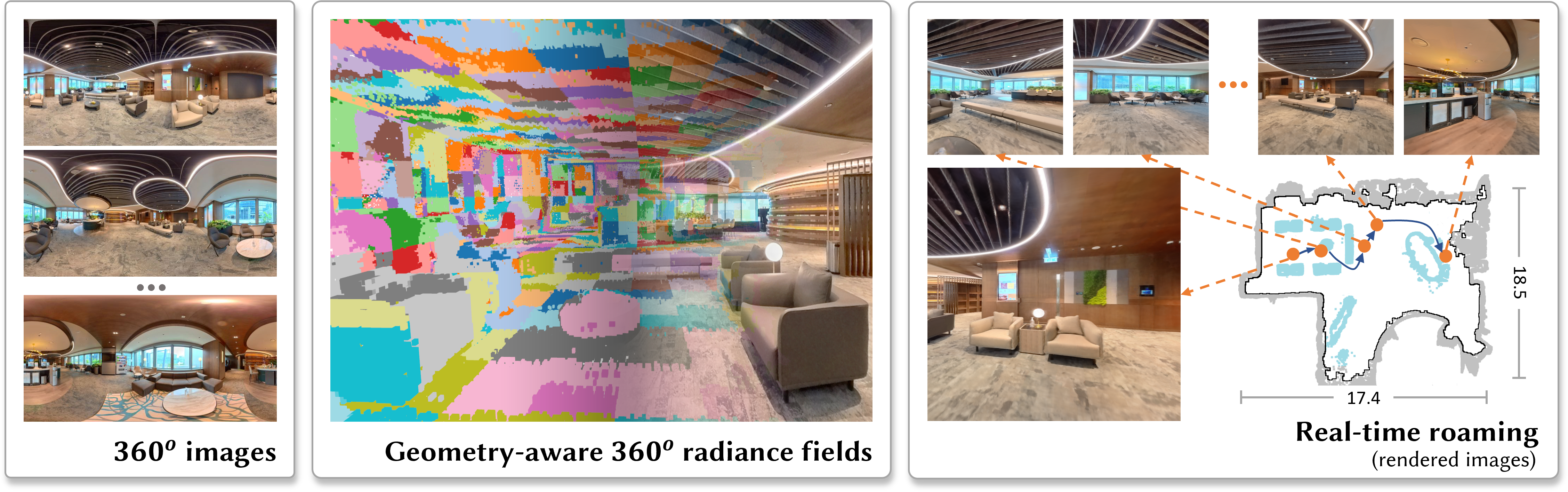

Neural radiance fields (NeRF) have recently achieved impressive results in novel view synthesis. However, previous work on NeRF has mainly focused on object-centric scenarios. In this work, we propose 360Roam, a novel scene-level NeRF system that can synthesize images of large-scale indoor scenes in real time and support VR roaming. Our system first builds an omnidirectional neural radiance field, 360NeRF, from multiple input 360° images. Using 360NeRF, we then progressively estimate a 3D probabilistic occupancy map that represents the scene geometry in the form of spatial density. Skipping empty space and upsampling occupied voxels allows us to accelerate volume rendering by using 360NeRF in a geometry-aware fashion. Furthermore, we use an adaptive divide-and-conquer strategy to slim and fine-tune the radiance fields for further improvement. The floorplan of the scene, extracted from the occupancy map, can provide guidance for ray sampling and facilitate a realistic roaming experience. To show the efficacy of our system, we collect a 360° image dataset covering a wide variety of scenes and conduct extensive experiments. Quantitative and qualitative comparisons with baselines demonstrate the superior performance of our system in novel view synthesis for complex indoor scenes.
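Because 360NeRF is built from 360° images rather than pinhole images, each pixel corresponds to a direction on the unit sphere. The minimal sketch below shows one common way to cast rays from such an image. It assumes an equirectangular panorama and a y-up camera frame with longitude 0 along +z; the helper name `equirect_ray_directions` is hypothetical, and none of these conventions are taken from 360Roam itself.

```python
import numpy as np


def equirect_ray_directions(height: int, width: int) -> np.ndarray:
    """Unit ray directions for every pixel of an equirectangular panorama.

    Hypothetical helper. Assumes a y-up camera frame with longitude 0 along +z.
    Returns an array of shape (height, width, 3).
    """
    # Pixel centers in normalized [0, 1) image coordinates.
    u = (np.arange(width) + 0.5) / width
    v = (np.arange(height) + 0.5) / height
    # Longitude spans [-pi, pi) left to right; latitude goes from +pi/2 (top) to -pi/2 (bottom).
    lon = (u - 0.5) * 2.0 * np.pi
    lat = (0.5 - v) * np.pi
    lon, lat = np.meshgrid(lon, lat)  # both arrays have shape (height, width)
    # Spherical-to-Cartesian conversion; the result is unit length by construction.
    x = np.cos(lat) * np.sin(lon)
    y = np.sin(lat)
    z = np.cos(lat) * np.cos(lon)
    return np.stack([x, y, z], axis=-1)
```

World-space rays would then follow as `dirs @ R.T` for a camera-to-world rotation `R`, with every ray of a panorama starting at that panorama's camera center.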
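The idea of a probabilistic occupancy map that lets volume rendering skip empty space can be illustrated with a small NumPy sketch. It assumes a regular voxel grid over a known bounding box and converts density into a per-voxel occupancy probability 1 − exp(−σδ), where δ is a step length. The map is refined with a decayed-maximum update, an Instant-NGP-style rule swapped in here that is not necessarily the one 360Roam uses. Along each ray, coarse samples that land in empty voxels are dropped, and extra jittered samples are added only next to occupied ones. `OccupancyGrid`, `geometry_aware_samples`, `density_fn`, the 0.01 threshold, and the sample counts are all hypothetical.

```python
import numpy as np


class OccupancyGrid:
    """Hypothetical probabilistic occupancy grid over an axis-aligned bounding box."""

    def __init__(self, bbox_min, bbox_max, resolution, decay=0.95):
        self.bbox_min = np.asarray(bbox_min, dtype=float)
        self.bbox_max = np.asarray(bbox_max, dtype=float)
        self.res = resolution
        self.decay = decay  # how much of the previous estimate survives each update
        self.prob = np.zeros((resolution,) * 3)

    def voxel_centers(self):
        c = (np.arange(self.res) + 0.5) / self.res
        grid = np.stack(np.meshgrid(c, c, c, indexing="ij"), axis=-1)
        return self.bbox_min + grid * (self.bbox_max - self.bbox_min)

    def update(self, density_fn, step):
        # Occupancy of a voxel: probability that a ray segment of length `step`
        # through its center is absorbed, i.e. 1 - exp(-sigma * step).
        sigma = density_fn(self.voxel_centers().reshape(-1, 3)).reshape(self.prob.shape)
        p_new = 1.0 - np.exp(-np.maximum(sigma, 0.0) * step)
        # Progressive estimate: decayed maximum, so stale occupancy fades out slowly.
        self.prob = np.maximum(self.decay * self.prob, p_new)

    def occupied(self, points, threshold=0.01):
        rel = (points - self.bbox_min) / (self.bbox_max - self.bbox_min)
        inside = np.all((rel >= 0.0) & (rel < 1.0), axis=-1)  # outside the box counts as empty
        idx = np.clip((rel * self.res).astype(int), 0, self.res - 1)
        return inside & (self.prob[idx[:, 0], idx[:, 1], idx[:, 2]] > threshold)


def geometry_aware_samples(grid, origin, direction, near, far, n_coarse=64, n_fine=4, rng=None):
    """Sample depths along one ray, skipping empty voxels and upsampling occupied ones."""
    rng = np.random.default_rng(0) if rng is None else rng
    t = np.linspace(near, far, n_coarse)
    pts = np.asarray(origin, float) + t[:, None] * np.asarray(direction, float)
    t_occ = t[grid.occupied(pts)]
    if t_occ.size == 0:
        return t_occ  # the ray only crosses empty space: nothing to evaluate
    # Upsampling: add n_fine jittered samples after each occupied coarse sample.
    dt = (far - near) / (n_coarse - 1)
    extra = t_occ[:, None] + rng.uniform(0.0, dt, size=(t_occ.size, n_fine))
    return np.sort(np.concatenate([t_occ, np.minimum(extra, far).ravel()]))
```

In this sketch, `update` would be called periodically during training with `density_fn` querying the radiance field's density, so the map sharpens progressively. Rays that cross only empty voxels then require no network queries at all.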
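One simple way to read a floorplan off a 3D occupancy map is to project it onto the ground plane. The sketch below assumes a grid of occupancy probabilities whose vertical axis is axis 1, and a height band (`y_lo`, `y_hi`) chosen to exclude floor and ceiling voxels. `extract_floorplan` and `is_walkable` are hypothetical helpers, and the paper's actual floorplan extraction may differ.

```python
import numpy as np


def extract_floorplan(prob, threshold=0.5, y_lo=None, y_hi=None, up_axis=1):
    """2D obstacle map (True = blocked) from a 3D occupancy-probability grid."""
    occ = np.moveaxis(prob > threshold, up_axis, -1)  # put the vertical axis last
    lo = 0 if y_lo is None else y_lo
    hi = occ.shape[-1] if y_hi is None else y_hi
    # A ground cell is blocked if any voxel in the chosen height band is occupied.
    # Excluding floor and ceiling layers keeps them from marking every cell as blocked.
    return occ[..., lo:hi].any(axis=-1)


def is_walkable(floorplan, cell):
    """True if `cell` (row, col) lies inside the map and is not blocked."""
    i, j = cell
    inside = 0 <= i < floorplan.shape[0] and 0 <= j < floorplan.shape[1]
    return inside and not floorplan[i, j]
```

A roaming viewer could, for example, reject camera moves whose target cell is not walkable, which keeps the virtual camera inside free space.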